Osaurus Transforms Mac into AI Powerhouse with Hybrid Local-Cloud LLM Server

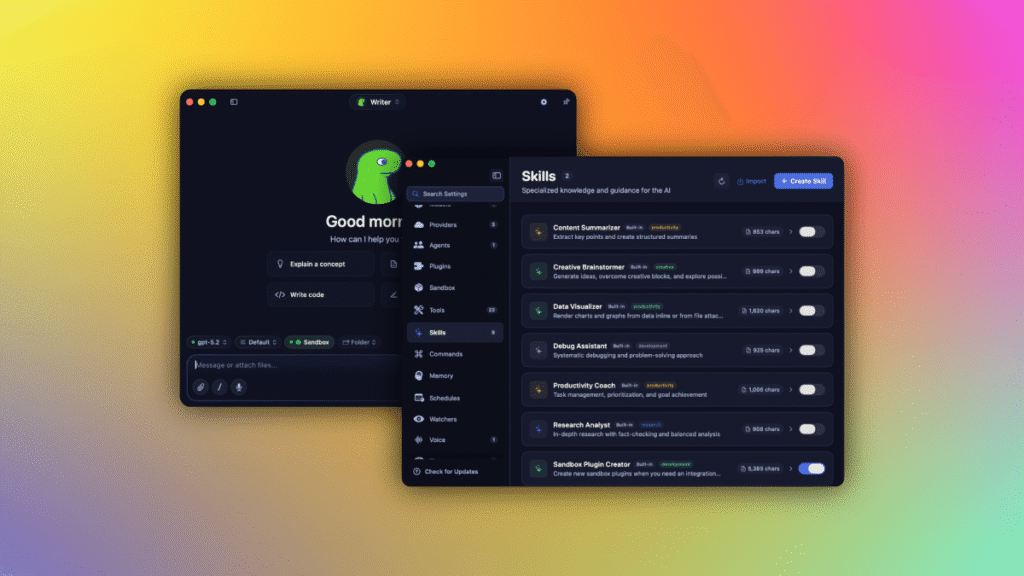

As artificial intelligence models become increasingly commoditized, the competition has shifted from the models themselves to the software layers that manage them. Entering this fray is Osaurus, an open-source, Apple-exclusive LLM server designed to give Mac users fine-grained control over their AI workflows by blending local execution with cloud flexibility.

Unlike traditional AI interfaces that lock users into a single ecosystem, Osaurus acts as a sophisticated “harness.” It allows users to pivot between high-performance cloud models and private local models while keeping critical files, tools, and memory anchored to their own hardware. This hybrid approach solves the primary friction point for power users: the trade-off between the raw intelligence of the cloud and the absolute privacy of on-device processing.

- Main Update: Launch of a hardware-isolated, virtual sandbox for secure LLM execution.

- Key Feature: Full MCP (Model Context Protocol) server support with 20+ native plugins.

- Compatibility: Runs on Apple Silicon Macs; supports local models such as Llama and DeepSeek V4 as well as cloud APIs (OpenAI, Anthropic).

- Hardware Requirement: Minimum 64GB RAM for local models; 128GB recommended for large-scale LLMs.
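The RAM figures above are the project's stated requirements. As a rough illustration of why local models need so much memory, the weights alone occupy about parameters × bits-per-parameter ÷ 8 bytes, and on Apple Silicon that memory comes out of the unified pool shared by the CPU and GPU. The minimal sketch below applies only that rule of thumb; it ignores the KV cache, activations, and runtime overhead, so real usage is higher, and the model sizes in the loop are hypothetical examples rather than Osaurus-specific figures.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    Excludes KV cache, activations, and runtime overhead (an assumption
    that makes this a lower bound, not a full memory estimate).
    """
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Hypothetical model sizes and quantization levels, for illustration only.
for params, bits in [(8e9, 4), (70e9, 4), (70e9, 8), (70e9, 16)]:
    gb = weight_memory_gb(params, bits)
    print(f"{params / 1e9:.0f}B params at {bits}-bit: ~{gb:.0f} GB of weights")
```

By this estimate, a 70-billion-parameter model quantized to 4 bits needs roughly 35 GB for weights before any working memory, which helps explain why the project sets its floor well above that.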

The Evolution from AI Companion to Infrastructure

Osaurus didn’t start as a server; it evolved from a conceptual AI companion named Dinoki. Co-founder Terence Pae, a former engineer at Tesla and Netflix, envisioned Dinoki as a modern, functional evolution of the “AI-powered Clippy.” However, early feedback revealed a systemic problem: users were frustrated by the “token tax”—the recurring costs associated with cloud-based AI processing.

This realization shifted the project’s trajectory. Pae recognized that Macs, particularly those with Apple Silicon, are well positioned to handle AI workloads. By running models locally, users could browse files, manage system configurations, and execute tasks without sending sensitive data to a third-party server. This pivot transformed Osaurus from a simple app into a comprehensive infrastructure layer for personal AI.

Breaking the “Token Tax” with Local Execution

The primary drivers for local LLM deployment are the elimination of per-token pricing and lower latency. When models are hosted locally, prompts and data never leave the machine, which is critical for professionals in the legal and healthcare sectors.

- Data Sovereignty: Files and system tools remain on the local machine.

- Cost Efficiency: Zero per-prompt fees when using local weights.

- Customization: Ability to swap models based on the specific task (e.g., coding vs. creative writing).

Technical Architecture and Security

For developers, Osaurus is an MCP (Model Context Protocol) server, meaning it can expose a wide array of tools to any MCP-compatible client. It ships with over 20 native plugins covering everything from Mail and Calendar to Git and File System access. This makes the AI not just a chatbot, but an operator capable of interacting with the macOS ecosystem.
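MCP is built on JSON-RPC 2.0: a client discovers a server's tools with a `tools/list` request and invokes one with `tools/call`, passing the tool's `name` and its `arguments`. The minimal sketch below only builds those request messages and opens no connection. The tool name `calendar_list_events` and its `date` argument are hypothetical placeholders, not Osaurus's actual plugin identifiers, and a real client must first complete the protocol's `initialize` handshake over a transport such as stdio or HTTP.

```python
import json
from itertools import count
from typing import Any, Dict, Optional

# Monotonic request IDs: JSON-RPC matches each response to its request by "id".
_next_id = count(1)


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 request object (no transport, no I/O)."""
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# Ask the server which tools it exposes.
list_tools = jsonrpc_request("tools/list")

# Invoke one tool by name. "calendar_list_events" and its "date" argument
# are HYPOTHETICAL placeholders, not real Osaurus plugin identifiers.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "calendar_list_events", "arguments": {"date": "2025-01-15"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Because any MCP-compatible client speaks this same message shape, the server's plugins are usable from tools other than Osaurus's own interface.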

Security has been a major hurdle for earlier “harness” tools such as OpenClaw. Osaurus addresses this with a hardware-isolated virtual sandbox. The design goal is that if an AI model attempts an unauthorized system call, the call stays contained within the isolated environment, limiting the damage from malicious code or accidental data deletion.

Model Compatibility Matrix

Osaurus supports a wide range of open-source and proprietary models. The following table summarizes the current compatibility landscape:

| Integration Type | Supported Models/Providers | Primary Use Case |
|---|---|---|
| Local / On-Device | Llama, DeepSeek V4, Gemma 4, Qwen3.6, Liquid AI LFM | Privacy, Offline Work, Basic Logic |
| Cloud API | OpenAI, Anthropic, Gemini, Grok, Venice AI | Complex Reasoning, Large Context Windows |
| Intermediate | Ollama, LM Studio, OpenRouter | Rapid Testing, Model Prototyping |
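To illustrate how the table's "Primary Use Case" column might translate into a per-request decision, here is a minimal sketch of a routing policy. The `choose_tier` function, its three flags, and the rule that privacy overrides every other consideration are assumptions for illustration; the article does not describe how Osaurus actually selects a provider.

```python
from enum import Enum


class Tier(Enum):
    """Integration tiers, labeled as in the compatibility table."""
    LOCAL = "Local / On-Device"
    CLOUD = "Cloud API"
    INTERMEDIATE = "Intermediate"


def choose_tier(sensitive: bool, needs_complex_reasoning: bool, prototyping: bool) -> Tier:
    """HYPOTHETICAL routing policy; not Osaurus's actual selection logic."""
    # Privacy first: sensitive data is never sent to a remote provider.
    if sensitive:
        return Tier.LOCAL
    # Hard reasoning tasks or very large inputs go to a cloud model.
    if needs_complex_reasoning:
        return Tier.CLOUD
    # Quick experiments across many models use an intermediate runner.
    if prototyping:
        return Tier.INTERMEDIATE
    # Default to local weights to avoid per-token fees.
    return Tier.LOCAL


print(choose_tier(sensitive=True, needs_complex_reasoning=True, prototyping=False).value)
print(choose_tier(sensitive=False, needs_complex_reasoning=True, prototyping=False).value)
```

Ordering the checks this way means a sensitive request stays local even when it would benefit from a stronger cloud model, which is the trade-off the hybrid design leaves to the user.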

Why Local AI Matters for the Future

The current AI boom has led to explosive demand for massive data centers, which are both energy-intensive and environmentally taxing. Pae and co-founder Sam Yoo argue that “intelligence per watt” is rising rapidly. As local models become more efficient, the need for centralized cloud infrastructure may diminish.

In the founders’ view, a business that deploys a Mac Studio on-premises can obtain capabilities comparable to cloud services without depending on a remote data center. This shift not only strengthens data privacy but also broadens access to AI by reducing reliance on subscription-based API gateways.

What Happens Next for Osaurus?

Currently participating in the New York-based Alliance accelerator, the Osaurus team is looking beyond the enthusiast market. The roadmap includes specialized iterations for high-compliance industries, such as healthcare and law, where local LLMs are not just a preference but a regulatory necessity.

With over 112,000 downloads in its first year, the project has clear momentum. As Apple’s M-series chips continue to gain more capable GPUs, Neural Engines, and unified memory, Osaurus aims to become a primary platform for the local AI era.

Source: Official Osaurus project documentation and company statements from founders Terence Pae and Sam Yoo.