Shocking $66 Billion Debut: Cerebras Disrupts AI Chip Market in 2026 IPO

In a seismic shift for the semiconductor industry, Cerebras Systems has launched the first major public offering of 2026, securing a $66 billion valuation. The company, which has spent a decade challenging the traditional GPU paradigm, raised $5.55 billion in its initial public offering (IPO) on Thursday, with shares surging nearly 70 percent on the first day of trading on the NASDAQ under the ticker CBRS.

- Market Value: $66 Billion upon IPO debut

- Capital Raised: $5.55 Billion

- Core Tech: Wafer-Scale Engine (WSE-3)

- Key Partner: G42 (UAE-based cloud provider)

- Performance: 2,200+ tokens per second for large models

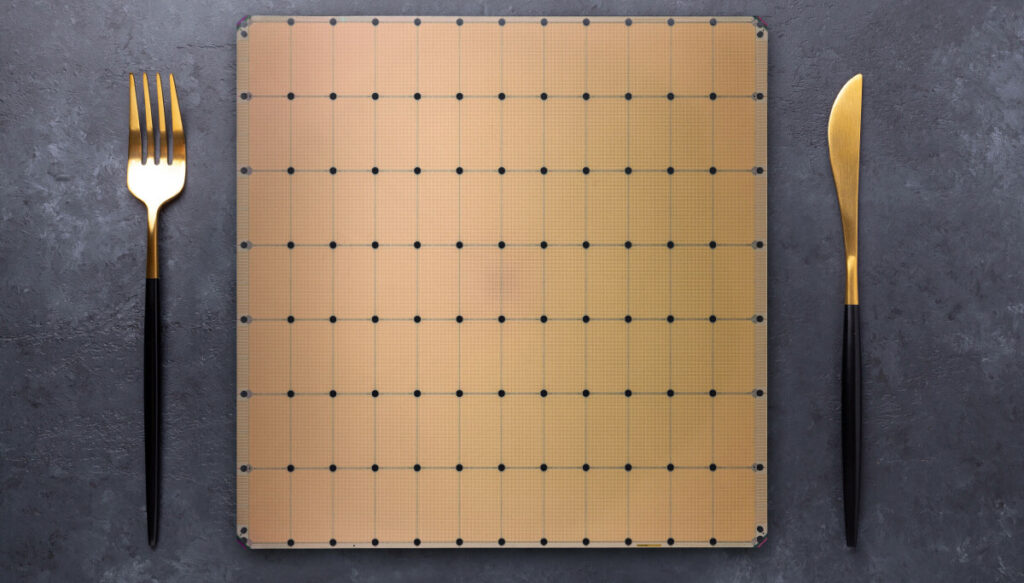

Engineering the ‘Dinner Plate’ Revolution

While traditional AI accelerators from companies like Nvidia rely on cutting silicon wafers into smaller dies and stitching them together using interconnects, Cerebras took a radically different path. Founded in 2015 by Andrew Feldman, the former head of SeaMicro, the company questioned why the industry wasted resources breaking chips apart only to reconnect them.

Their solution was the Wafer-Scale Engine (WSE), a monolithic chip the size of a dinner plate, measuring 46,225 mm². Keeping the wafer intact allows far higher compute density than a conventional die. By using on-chip SRAM instead of external HBM, Cerebras avoids the off-chip memory bottleneck that constrains standard GPUs. The architectural gamble paid off, allowing the company to scale from its first-generation chip to the latest WSE-3, which delivers 125 petaFLOPS of sparse compute.
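To put the "dinner plate" figure in physical terms, the quoted area can be converted to a side length. This is a minimal sketch that assumes the die is square, which the article does not state; `WSE_AREA_MM2` and `square_side_mm` are illustrative names.

```python
import math

# Die area quoted in the article, in square millimetres.
WSE_AREA_MM2 = 46_225


def square_side_mm(area_mm2: int) -> int:
    """Return the side of a square with the given area.

    Assumes a square die (an assumption, not a fact from the article)
    and raises if the area is not a perfect square.
    """
    side = math.isqrt(area_mm2)
    if side * side != area_mm2:
        raise ValueError(f"{area_mm2} mm^2 is not a perfect square")
    return side


if __name__ == "__main__":
    side_mm = square_side_mm(WSE_AREA_MM2)
    print(f"{side_mm} mm per side ({side_mm / 10} cm)")
```

Under the square-die assumption, the quoted area works out to 215 mm per side, or about 21.5 cm.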

From Niche Training to Inference Dominance

For years, Cerebras was primarily known as a powerhouse for training massive AI models. The company gained significant traction through a strategic partnership with G42, the UAE-based cloud provider. This relationship led to the development of the Condor Galaxy cluster, creating a footprint of nine supercomputing sites across the globe.

However, the real catalyst for the current valuation is the company’s pivot toward inference-as-a-service. The WSE-3’s reported memory bandwidth of 21 PB/s is nearly 1,000 times that of Nvidia’s newest Rubin GPUs. According to data from Artificial Analysis, Cerebras can generate over 2,200 tokens per second when running GPT-OSS 120B High, nearly 2.8 times the throughput of the fastest closed GPU clouds. This speed has attracted a prestigious roster of clients including Meta, Mistral AI, Perplexity, and OpenAI.
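The ratios quoted above can be turned into the baseline numbers they imply. The sketch below uses only the article's figures, which are not independently verified here, and treats "nearly 1,000 times" and "nearly 2.8 times" as exact ratios, so the results are rough bounds. The function names are illustrative.

```python
# Figures quoted in the article.
WSE3_BANDWIDTH_PB_S = 21.0       # reported WSE-3 memory bandwidth, PB/s
BANDWIDTH_RATIO = 1_000          # "nearly 1,000 times" a Rubin GPU
CEREBRAS_TOKENS_PER_S = 2_200    # GPT-OSS 120B High, per Artificial Analysis
THROUGHPUT_RATIO = 2.8           # "nearly 2.8 times" the fastest GPU cloud


def implied_gpu_bandwidth_tb_s(wse_pb_s: float, ratio: float) -> float:
    """GPU bandwidth in TB/s implied by the quoted ratio (1 PB = 1,000 TB)."""
    return wse_pb_s * 1_000 / ratio


def implied_gpu_tokens_per_s(cerebras_tps: float, ratio: float) -> float:
    """Throughput of the fastest GPU cloud implied by the quoted speedup."""
    return cerebras_tps / ratio


def ms_per_token(tokens_per_s: float) -> float:
    """Average time spent generating one token, in milliseconds."""
    return 1_000 / tokens_per_s


if __name__ == "__main__":
    gpu_bw = implied_gpu_bandwidth_tb_s(WSE3_BANDWIDTH_PB_S, BANDWIDTH_RATIO)
    gpu_tps = implied_gpu_tokens_per_s(CEREBRAS_TOKENS_PER_S, THROUGHPUT_RATIO)
    print(f"Implied GPU bandwidth: {gpu_bw:.1f} TB/s")
    print(f"Implied GPU cloud throughput: {gpu_tps:.0f} tokens/s")
    print(f"Cerebras time per token: {ms_per_token(CEREBRAS_TOKENS_PER_S):.2f} ms")
```

Taken at face value, the article's numbers imply a GPU bandwidth of about 21 TB/s and a best GPU-cloud throughput of roughly 786 tokens per second. Because both ratios are described as "nearly", the true baselines would be slightly higher.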

The Strategic Pivot and Market Impact

The road to the NASDAQ was not linear. Andrew Feldman previously withdrew an S-1 filing in September 2024 after realizing the company’s revenue was too heavily concentrated, with G42 accounting for 87 percent of the total. By waiting until 2026, Cerebras was able to showcase a diversified revenue stream fueled by its inference platform and new partnerships with AWS and Cognition.

The listing matters because it signals that the ‘wafer-scale’ approach can be commercially viable at scale. It also challenges Nvidia’s dominance of the AI hardware market by offering a credible alternative for enterprises that need low-latency, high-throughput LLM inference.

Future Outlook for AI Hardware

Looking ahead, the industry expects Cerebras to aggressively expand its inference-as-a-service footprint. While Nvidia continues to dominate the general-purpose GPU market, the specialized nature of Cerebras’ hardware positions it as the primary choice for high-speed generative AI. Analysts expect the company to focus on further reducing its cost per token, potentially triggering a price war in the AI cloud sector as it leverages its SRAM advantage to undercut competitors.